Powerful New Capabilities for Domino Model Monitor (DMM)

Bob Laurent | 2020-09-04 | 5 min read

By Bob Laurent, Senior Director, Product Marketing, Domino Data Lab on September 04, 2020 in Product Updates

This week we announced the latest release of Domino’s data science platform, Domino 4.3, which aligns with critical investments that IT is making in infrastructure and security to support the needs of enterprise data scientists and data science at scale. We also announced powerful new capabilities for our exciting model monitoring product, Domino Model Monitor (DMM). DMM, which was introduced with Domino 4.2 in June 2020, lets organizations automate the monitoring of machine learning models in production to reduce the risk of financial loss and degraded customer experience.

The data that models see in production changes over time as the world changes. This problem, known as “data drift”, can degrade model accuracy and often goes unnoticed until it negatively impacts business outcomes. DMM creates a “single pane of glass” to monitor the performance of all models across your entire organization, regardless of where those models were developed or deployed.

Model monitoring is especially critical during today’s unprecedented times; companies’ vital models were trained on data from a vastly different economic environment, when human behavior was “normal”, pre-COVID. With DMM, you can identify production data that has changed from training data (i.e., data drift), missing information, and other issues, and take corrective action before bigger problems occur. To learn more about model monitoring and some best practices you can follow to ensure your models are performing at their best, check out this recent blog.
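To make the idea of data drift concrete, here is a minimal sketch of one widely used drift statistic, the Population Stability Index (PSI), together with a simple missing-value check. This is an illustration only and not a description of how DMM computes drift; the function names, the bin count, and the common rule of thumb that a PSI above roughly 0.2 signals meaningful drift are all assumptions made for this example.

```python
import math
from typing import Optional, Sequence


def psi(train: Sequence[float], prod: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a training and a production sample.

    Bin edges are taken from the training data; production values outside
    that range are clamped into the first or last bin.
    """
    if not train or not prod:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(train), max(train)
    width = (hi - lo) / bins or 1.0  # avoid a zero width for constant data

    def fractions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each fraction at eps so the logarithm below is defined.
        return [max(c / len(values), eps) for c in counts]

    p, q = fractions(train), fractions(prod)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def missing_rate(values: Sequence[Optional[float]]) -> float:
    """Fraction of entries that are None (a stand-in for missing data)."""
    if not values:
        raise ValueError("values must be non-empty")
    return sum(v is None for v in values) / len(values)


# Hypothetical feature values: production has shifted upward by 50.
training_feature = [float(v) for v in range(100)]
production_feature = [v + 50.0 for v in training_feature]
drift_score = psi(training_feature, production_feature)
```

A score near zero means the production distribution still looks like the training distribution; a large score is a prompt to investigate, not proof that the model is wrong.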

We have already seen lots of customer interest in DMM, both from existing Domino customers and from net-new prospects who view DMM as central to their model monitoring strategy. In Domino 4.3 we’re adding powerful capabilities to DMM to make it even easier to maintain high-performing models.

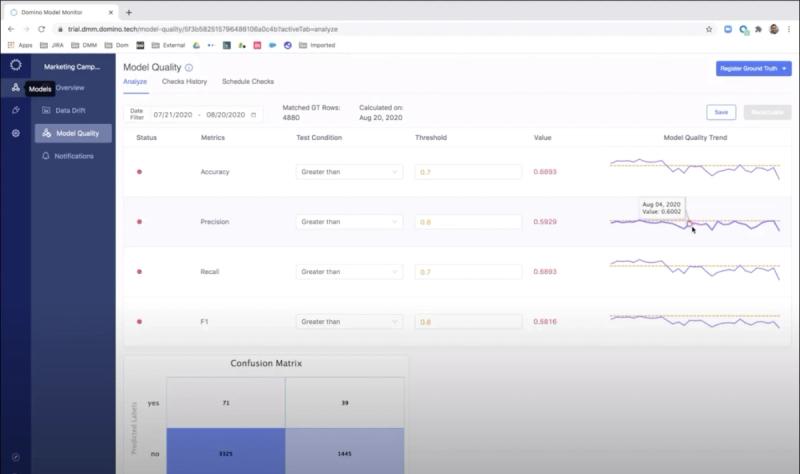

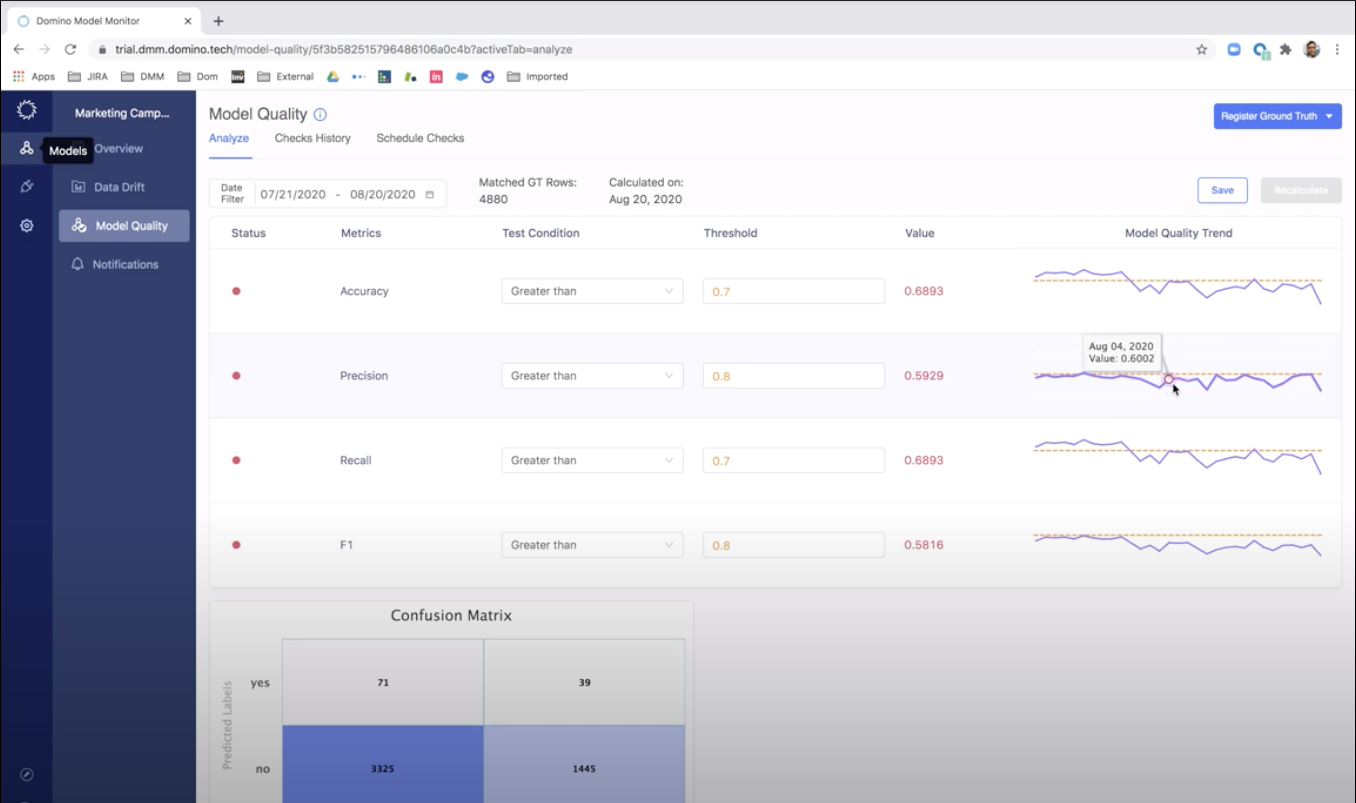

New trend analysis capabilities

DMM can measure the amount of change that a model has experienced since it was trained, and alert you if the change exceeds a threshold you set. This ongoing monitoring frees you (and other data scientists and ML engineers) from having to constantly watch every model you have in production, so you can focus on other value-added work. Now, when you receive an alert and want to investigate further, new trend analysis capabilities provide insights into how the quality of a model’s predictions (the output of a model) has been changing over time. This analysis can highlight whether recent model degradation was due to a one-off event (e.g., stock on-hand shortage, temporary store closing, or data pipeline issue) or whether there has been progressive degradation.

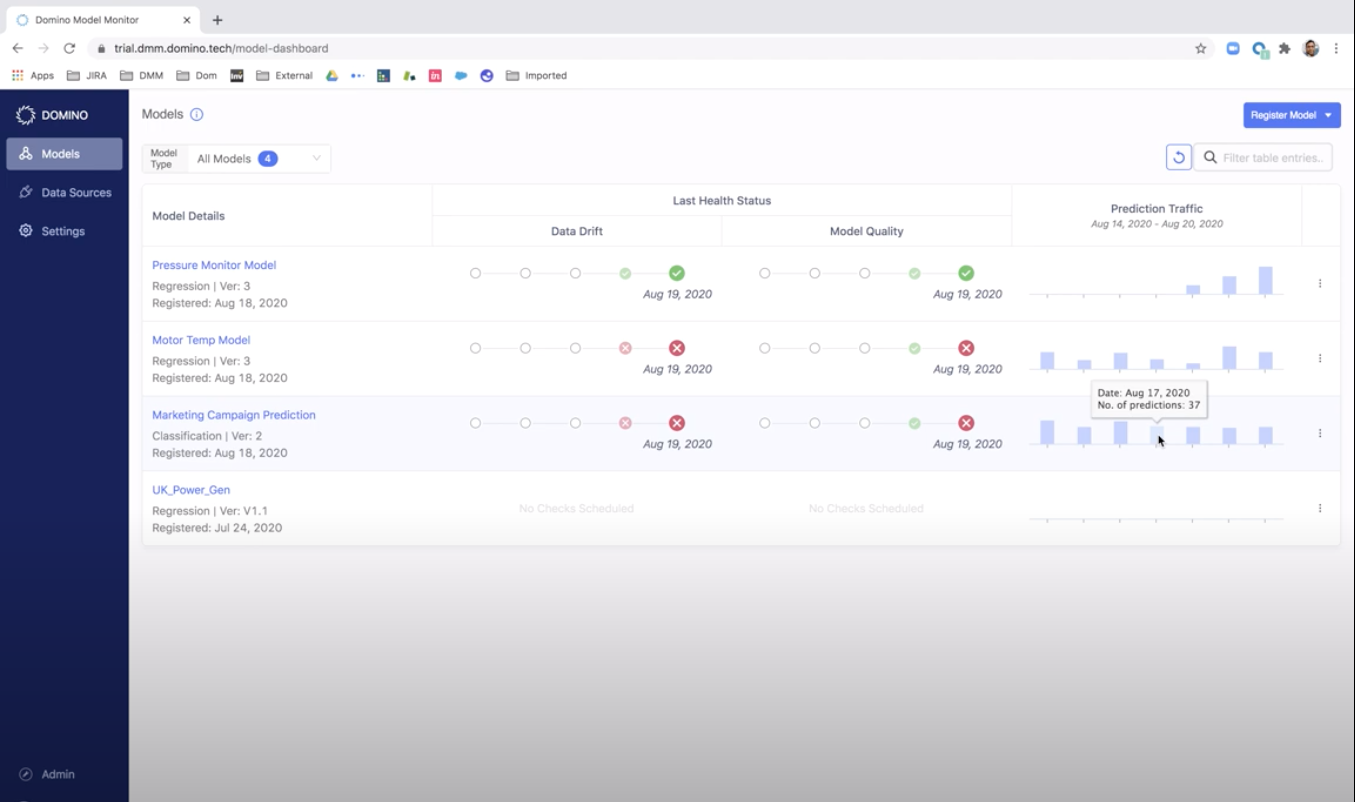
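The distinction between a one-off event and progressive degradation can be sketched with a simple heuristic over a daily quality metric. This is not DMM's algorithm; the function names, the `recent` window, and the rule itself (the latest points are all below the threshold and the least-squares trend is downward) are assumptions chosen for illustration.

```python
from typing import List, Sequence


def breaches(series: Sequence[float], threshold: float) -> List[int]:
    """Indices of the days on which the metric fell below the threshold."""
    return [i for i, v in enumerate(series) if v < threshold]


def slope(series: Sequence[float]) -> float:
    """Least-squares slope of the metric against the day index."""
    n = len(series)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(series) / n
    num = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(series))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


def classify(series: Sequence[float], threshold: float, recent: int = 3) -> str:
    """Label a metric history as 'healthy', 'one-off', or 'progressive'."""
    if not breaches(series, threshold):
        return "healthy"
    tail = series[-recent:]
    # Progressive: the most recent points are all below the threshold
    # and the overall trend is downward.
    if all(v < threshold for v in tail) and slope(series) < 0:
        return "progressive"
    # Otherwise the metric dipped but is not in a sustained decline.
    return "one-off"


# Hypothetical daily accuracy values for two models.
dip_then_recovery = [0.92, 0.91, 0.93, 0.70, 0.92, 0.91, 0.92]
steady_decline = [0.92, 0.90, 0.88, 0.86, 0.84, 0.82, 0.80]
labels = [classify(s, threshold=0.85) for s in (dip_then_recovery, steady_decline)]
```

With a threshold of 0.85, the first series is labeled a one-off (a single bad day followed by recovery) and the second is labeled progressive.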

New traffic charts

New traffic charts provide insights into the volume of a model’s predictions and ground truth data over time. These charts can highlight if particular models have been unusually active or inactive to facilitate troubleshooting. Traffic charts, when used in conjunction with data drift analysis capabilities, can help you effectively triage potential model degradation problems. With this capability, you will now have greater insights into not just the volume of predictions made by the models, but also how much ground truth data is being generated on different days for each of them.
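For readers who want to see what sits behind a traffic chart, the sketch below counts predictions and ground truth records per day and flags days with unusually high or low prediction volume. The function names and the median-based cutoffs (`low` and `high`) are assumptions for this example and do not reflect how DMM builds its charts.

```python
from collections import Counter
from datetime import date
from statistics import median
from typing import Dict, Iterable, Tuple


def traffic_table(prediction_days: Iterable[date],
                  ground_truth_days: Iterable[date]) -> Dict[date, Tuple[int, int]]:
    """Map each day to (prediction count, ground truth count)."""
    preds = Counter(prediction_days)
    truth = Counter(ground_truth_days)
    # Only days that appear in at least one input are listed; a day with
    # no records at all would need to be filled in from a calendar.
    return {day: (preds[day], truth[day]) for day in sorted(set(preds) | set(truth))}


def unusual_days(table: Dict[date, Tuple[int, int]],
                 low: float = 0.5, high: float = 2.0) -> Dict[date, int]:
    """Days whose prediction volume is far from the median daily volume."""
    if not table:
        return {}
    typical = median(p for p, _ in table.values())
    return {day: p for day, (p, _) in table.items()
            if p < low * typical or p > high * typical}


# Hypothetical record dates: one entry per prediction or ground truth record.
prediction_days = ([date(2020, 9, 1)] * 100 + [date(2020, 9, 2)] * 110
                   + [date(2020, 9, 3)] * 10 + [date(2020, 9, 4)] * 95)
ground_truth_days = [date(2020, 9, 1)] * 40 + [date(2020, 9, 2)] * 35

table = traffic_table(prediction_days, ground_truth_days)
flagged = unusual_days(table)
```

In this made-up data, September 3 stands out for low prediction volume, and the table also shows that no ground truth has arrived yet for September 3 and 4, which is the kind of gap worth checking before trusting a quality metric for those days.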

Next steps

Model monitoring is an emerging discipline that addresses some of the challenges that companies face with deploying machine learning at scale. We’re excited to be at the forefront with DMM, and we’re dedicated to helping data science and MLOps teams optimize health and processes across their models.

For more information about model monitoring and how DMM addresses the most common problems that companies have with maintaining high-quality predictions, check out our new whitepaper on best practices. You can also watch a replay of a recent webinar where we discussed the problems that data drift can cause and also provided a detailed demo of DMM. If you’re ready to try DMM, we’ve made a free trial available so you can learn even more about DMM and experience its latest capabilities with your models in production.

Bob Laurent is the Head of Product Marketing at Domino Data Lab where he is responsible for driving product awareness and adoption, and growing a loyal customer base of expert data science teams. Prior to Domino, he held similar leadership roles at Alteryx and DataRobot. He has more than 30 years of product marketing, media/analyst relations, competitive intelligence, and telecom network experience.
