Machine Learning Operations (MLOps) is a term that has gained popularity in the last ten years and is often used to describe a set of practices that aims to deploy and maintain machine learning (ML) models in production, reliably and efficiently.

However, that definition has repeatedly proven too narrow, since it leaves out crucial aspects of the data science lifecycle, including the management and development of ML models.

Given today’s business challenges, model-driven businesses should aim to adopt Enterprise MLOps. We define Enterprise MLOps as a “system of processes for the end-to-end data science lifecycle at scale. It provides a venue for data scientists, engineers, and other IT professionals to efficiently work together with enabling technology on the development, deployment, monitoring, and ongoing management of machine learning (ML) models. It allows organizations to quickly and efficiently scale data science and MLOps practices across the entire organization, without sacrificing safety or quality.”

It's worth noting, however, that this holistic view of MLOps became possible only after learning from the successes and best practices of another discipline: Development Operations (DevOps).

As its name indicates, DevOps is the combination of software development (Dev) and operations (Ops) to speed up the development cycle of applications and services. The most significant changes introduced by DevOps are leaving silos behind and fostering collaboration between software development and IT teams, as well as adopting practices and tools that automate and integrate the processes shared by those stakeholders.

While the goals of DevOps and MLOps are similar, they are not the same. This article will explore the differences between MLOps and DevOps.

Why MLOps

ML models are built by data scientists. However, model development represents a small fraction of the components that comprise an enterprise ML production workflow.

To operationalize ML models and make them production-ready, data scientists need to work closely with other professionals, including data engineers, ML engineers, and software developers. However, setting up effective communication and collaboration across these functions is very challenging. Each role has its own responsibilities. For example, data scientists develop ML models, and ML engineers deploy those models.

The nature of the tasks also differs. For instance, working with data is more research-oriented than software development. These differences make collaboration harder, and poor communication can lead to significant delays in delivering the final product.

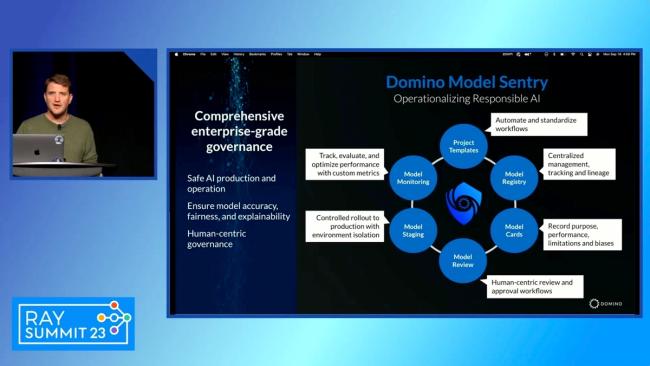

MLOps, then, can be interpreted as a series of technologies and best practices that facilitate the management, development, deployment, and monitoring of data science models at scale. Enterprise MLOps takes these same principles and applies them to large-scale production environments, where models depend more heavily on having security, governance, and compliance systems in place.

Differences Between MLOps and DevOps

There are several key differences between MLOps and DevOps, including the following:

Data Science Models Are Probabilistic, Not Deterministic

ML models generate probabilistic predictions. This means their outputs can vary depending on the incoming data and the underlying algorithms and architecture. Model performance can degrade quickly if the conditions under which the model was trained change. For instance, the performance of many ML models suffered when the nature of incoming data changed drastically during the COVID-19 pandemic.

Traditional software systems, by contrast, are largely deterministic: they behave the same way until the underlying code changes, such as during an upgrade.
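One common way to notice that incoming data no longer looks like the training data is to compare the two distributions feature by feature. The following is a minimal sketch of the Population Stability Index (PSI), offered as an illustration rather than as the method used by any particular tool. The function name, the ten-bin default, and the small `eps` floor are assumptions made for this example.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare two samples of one feature; larger values mean a bigger shift."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def fractions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the training range land in the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each share at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

Comparing a sample with itself yields a PSI of zero, while a sample shifted well outside the training range yields a large value. A frequently quoted rule of thumb treats values above roughly 0.25 as a significant shift, but the right threshold depends on the feature and the use case.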

Data Science Has a Higher Rate of Change

DevOps lets you develop and manage complex software products through a set of standard practices and processes. Data science products and ML products are also software. However, they are more difficult to manage because they involve data and models in addition to code.

You can think of an ML model as a mathematical expression or algorithm trained to recognize specific patterns in the supplied data and generate predictions based on these patterns. The challenge when working with ML models lies in the many moving parts involved in their development: datasets, code, models, hyperparameters, pipelines, configuration files, deployment settings, and model performance metrics, to name a few. This is why MLOps is necessary: models are more than software, and they require a different strategy for their development, monitoring, and deployment at scale.
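To make the "moving parts" concrete, here is a minimal sketch of a record that ties a trained model to the code, data, and hyperparameters that produced it. The `RunManifest` class and its field names are hypothetical and exist only for this illustration; real experiment-tracking tools capture far more.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass(frozen=True)
class RunManifest:
    """Hypothetical record of the moving parts behind one trained model."""

    code_version: str   # for example, a Git commit hash
    dataset_hash: str   # checksum of the training data snapshot
    hyperparameters: dict = field(default_factory=dict)
    metrics: dict = field(default_factory=dict)

    def fingerprint(self) -> str:
        """Hash the inputs only, so identical inputs map to the same ID."""
        inputs = {
            "code_version": self.code_version,
            "dataset_hash": self.dataset_hash,
            "hyperparameters": self.hyperparameters,
        }
        # sort_keys makes the JSON text independent of dict insertion order.
        payload = json.dumps(inputs, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()[:12]
```

Two runs with the same code, data, and hyperparameters share a fingerprint even if their recorded metrics differ, which makes unexpected metric changes easier to spot. Changing any single input produces a different fingerprint.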

Different Responsibilities

MLOps originated from DevOps. However, the responsibilities differ for each.

DevOps responsibilities include the following:

- Continuous integration, continuous deployment (CI/CD): CI/CD refers to the process of automating different stages of software development, including building, testing, and deployment. It allows your team to continuously fix bugs, implement new features, and deliver code into production so that you can ship the software with speed and efficiency.

- Uptime: this refers to the total time your server remains available and is a general infrastructure reliability metric. DevOps focuses on maintaining high uptime to ensure the high availability of software. This helps businesses run critical operations without facing major service disruption.

- Monitoring/logging: monitoring lets you analyze the performance of applications throughout the software lifecycle, as well as the stability of the underlying infrastructure. It involves several processes, including logging, alerting, and tracing. Logging provides data about critical components, including debugging information that helps you solve problems quickly. Alerting helps you stay ahead of issues by notifying your team as they arise. Tracing provides insight into performance and behavior, which you can use to enhance the stability and scalability of apps in production.
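As a worked example of the uptime metric described above, the sketch below turns a list of outage durations into an availability percentage. The function name and its inputs are assumptions made for this illustration; in practice the outage durations would come from your monitoring system.

```python
from typing import Iterable


def uptime_percent(period_seconds: float, outage_seconds: Iterable[float]) -> float:
    """Share of the period during which the service was available, in percent."""
    if period_seconds <= 0:
        raise ValueError("period_seconds must be positive")
    downtime = sum(outage_seconds)
    if downtime < 0 or downtime > period_seconds:
        raise ValueError("total downtime must fit inside the period")
    return 100.0 * (period_seconds - downtime) / period_seconds
```

For a 30-day month (2,592,000 seconds), 2,592 seconds of total downtime, or about 43 minutes, corresponds to 99.9% uptime.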

In contrast, MLOps responsibilities include the following:

- Manage stage. This stage revolves around identifying the problem to be solved, defining the expected results, establishing the project requirements, and assigning roles and work priorities. Once the model is deployed in production, the results obtained during the monitoring stage are reviewed to assess how successful the project has been. The successes and errors identified in this review can be used to improve the current project and future projects. In other words, this stage includes aspects related to:

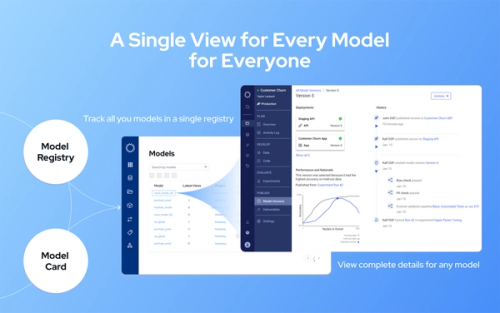

  - Project management: Access control to resources such as information, central repository snapshots, status tracking, and more.

  - Enterprise-wide knowledge management: Crucial for improving efficiency through the reuse of key learnings. Domino's Knowledge Center is a good example of this type of central knowledge repository.

  - Governance of technical resources: Includes cost tracking capabilities, role-based permissions for information access, tooling, and computing resource management.

- Develop stage. During this stage, data scientists build and evaluate various models using a variety of modeling techniques. This means they can experiment and perform R&D using environments such as Domino's data science workbench, where they can easily access data, computing resources, tools such as Jupyter or RStudio, libraries, code versioning, job scheduling, and more.

- Deploy stage. Once a model proves successful, it is ready to be deployed to production and deliver value by aiding business process decision-making. To this end, this stage involves aspects including:

  - Flexible hosting that allows data scientists to freely deploy permissioned model APIs, web apps, and other data products into a variety of hosting infrastructures quickly and efficiently.

  - Packaging models in containers that can be easily consumed at scale by external systems.

  - Support for data pipelines that handle the ingestion, orchestration, and management of data.

- Monitor stage. This stage is responsible for measuring the performance of the model and checking whether it behaves as expected. In other words, it evaluates whether the model is delivering its expected value to the business. Ideally, this stage provides the MLOps team with capabilities including:

  - Pipeline verification, which allows scoring pipelines to be tested and deployed via CI/CD principles.

  - Tools that facilitate A/B testing of model versions in production and track results to inform business decisions.

  - A model repository that brings together all the model APIs and other assets so that aspects such as their health, usage, and history can be evaluated.

  - Tools such as Domino's Integrated Model Monitoring that carry out comprehensive monitoring of all the organization's models as soon as they are deployed and that also proactively detect and alert you to any problem.

  - Mechanisms that facilitate retraining and rebuilding models using the history and context of the original model.
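A/B testing of model versions, mentioned in the monitor stage above, needs a stable way to decide which version serves each request. One possible approach is to hash a request or user identifier, as in the minimal sketch below. The function name, the "champion" and "challenger" labels, and the 10% default share are assumptions made for this illustration and do not describe any specific product.

```python
import hashlib


def assign_variant(request_id: str, challenger_share: float = 0.1) -> str:
    """Route a stable fraction of traffic to the challenger model."""
    if not 0.0 <= challenger_share <= 1.0:
        raise ValueError("challenger_share must be between 0 and 1")
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    # Interpret the first 8 bytes as an integer in the range [0, 2**64).
    bucket = int.from_bytes(digest[:8], "big")
    threshold = int(challenger_share * 2**64)
    return "challenger" if bucket < threshold else "champion"
```

Because the assignment depends only on the identifier, the same user always reaches the same model version, which keeps the tracked results comparable across requests.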

MLOps and DevOps: Overlapping Responsibilities

While MLOps and DevOps differ, they also have some overlapping responsibilities:

- Both disciplines extensively use version control systems to keep track of changes made to each artifact.

- DevOps and MLOps both make extensive use of monitoring to detect issues, measure performance, and perform optimizations on their respective artifacts.

- Both use CI/CD tools. In DevOps, these tools are intended to automate the creation of applications, whereas in MLOps they’re used to build and train ML models.

- DevOps and MLOps encourage continuous improvement of their respective processes. Likewise, DevOps and MLOps promote collaboration and integration between different disciplines for a common goal.

- Both disciplines require a deep commitment from all stakeholders to implement their principles and best practices throughout the organization.
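To illustrate how CI/CD carries over to models, a pipeline that retrains a model can end with an automated check that decides whether the new version should replace the current one. The sketch below is one possible form of such a gate. The function name, the metric dictionaries, and the assumption that a higher metric value is better are all choices made for this example.

```python
def should_promote(
    candidate_metrics: dict,
    baseline_metrics: dict,
    metric: str = "accuracy",
    min_gain: float = 0.0,
) -> bool:
    """Return True when the candidate beats the baseline by at least min_gain.

    Assumes that a higher value of the chosen metric is better.
    """
    if metric not in candidate_metrics or metric not in baseline_metrics:
        raise KeyError(f"metric {metric!r} missing from one of the reports")
    return candidate_metrics[metric] - baseline_metrics[metric] >= min_gain
```

A CI job could call this function after training and fail the build when it returns `False`, in the same way a failing unit test blocks an application release.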

Wrapping Up

In this article, you learned how the responsibilities of an MLOps team differ from those of a DevOps team, as well as where they overlap. Data science teams require high levels of resources and flexible infrastructure, and MLOps teams provide and maintain those resources.

MLOps is key to succeeding with ML in production. It helps you reach your goals despite constraints such as limited budget and resources. In addition, MLOps helps you enhance your agility and speed so you can be more model-driven.
