With data being called the oil of the 21st century and data science labeled the sexiest job of the century, we're seeing a sharp rise in data science and machine learning applications in every field. From IT to finance to business, predictive analytics is reshaping entire industries.

However, as the volume of data continues to rise, one of the biggest challenges data scientists and ML engineers face is how to store and process such large volumes efficiently. The problem gets even worse when they need to perform computationally heavy tasks such as feature engineering. Put simply, infrastructure is currently the limiting factor in the ML world.

So, in today's article, we'll look at how to solve the problem of large-scale computing using Snowflake and NVIDIA's RAPIDS, and at the vital role Dask plays in tying the two together.

A Little Background

Going with on-site computing was a no-brainer for most businesses a few years back. However, given the changing dynamics of computing and storage demand, that model doesn't work as well anymore. Problems arise when businesses need to scale, keep data backups, or handle multiple data formats at once, to name a few.

To address these issues, some businesses went one step further and set up on-premise data warehouses. Whenever they wanted to run computationally expensive tasks such as model training or feature engineering, they transferred all the required data to wherever the computing would take place. Not only is this slow and expensive, but it also introduces a variety of security issues.

However, the architecture we’re going to discuss in this article solves these problems by bringing together the best of both worlds: data and computing.

Snowflake + RAPIDS – Why Is the Combination So Good?

Let’s talk about Snowflake first. The Snowflake Data Cloud is a well-known cloud data platform designed to cover a user’s storage needs. Conventional on-site storage solutions face problems with scaling, security, governance, and so on, and Snowflake is built to avoid them. Not only can you scale up storage volume conveniently, but you're also not limited to any particular type of data.

Next up, we have NVIDIA, which has made a name for itself over the years through its hardware and software for heavy computing. One of its successful projects is RAPIDS, an open-source, GPU-accelerated platform where data engineers can run compute-intensive tasks while achieving speedups of up to 100x.

One of the great things about RAPIDS is that its libraries mirror commonly used APIs such as pandas and scikit-learn, so engineers can focus on their use cases instead of worrying about the implementation.

This combination of Snowflake and RAPIDS, when used to train end-to-end machine learning models that require heavy computing, far outpaces conventional methods in terms of speed. However, the complete integration requires one more tool: Dask. Later in the article, we will see how Dask helps us achieve this end-to-end architecture.

Where Does Dask Come into Play?

Wondering why we need Dask? Dask is one of the best-known frameworks in the data science community for parallel computing. It speeds tasks up by processing them in a distributed manner. Luckily, Dask works with most of the APIs that data scientists need, such as pandas, scikit-learn, and, in this case, RAPIDS.
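To make "processing in a distributed manner" concrete, here is a minimal standard-library sketch of the split, apply, and combine pattern that frameworks like Dask automate. It does not use Dask itself: the function names (`split`, `partial_stats`, `parallel_mean`) are hypothetical, the input is assumed to be a non-empty list, and the thread pool only illustrates the structure rather than real multi-machine execution.

```python
from concurrent.futures import ThreadPoolExecutor


def split(values, n_partitions):
    """Cut a non-empty list into roughly equal, contiguous partitions."""
    size = -(-len(values) // n_partitions)  # ceiling division
    return [values[i:i + size] for i in range(0, len(values), size)]


def partial_stats(partition):
    """Work done independently on each partition: (sum, count)."""
    return sum(partition), len(partition)


def parallel_mean(values, n_partitions=4):
    """Compute a mean by combining per-partition partial results."""
    partitions = split(values, n_partitions)
    with ThreadPoolExecutor(max_workers=n_partitions) as pool:
        partials = list(pool.map(partial_stats, partitions))
    total = sum(s for s, _ in partials)
    count = sum(c for _, c in partials)
    return total / count


mean = parallel_mean(list(range(1, 101)))
print(mean)  # 50.5
```

Each partition is processed without knowledge of the others, which is what lets a scheduler place the work on separate cores or machines and merge the small partial results at the end.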

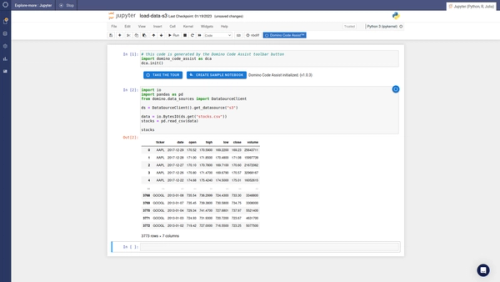

Here’s how you can use the dask-snowflake package to load your data from Snowflake into Dask dataframes.

from dask_snowflake import read_snowflake

query = "SELECT * FROM SAMPLE_DATA LIMIT 100"

df = read_snowflake(query=query, connection_kwargs=DB_CREDS)
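The snippet above uses `DB_CREDS` without defining it. As a minimal sketch, assuming the dictionary is forwarded as keyword arguments to the Snowflake Python connector, it might look like the following. The environment variable names are hypothetical and the fallback values are placeholders, not working credentials.

```python
import os

# Hypothetical environment variable names; adjust them to your own setup.
# The keys mirror common snowflake.connector.connect() arguments.
DB_CREDS = {
    "user": os.environ.get("SNOWFLAKE_USER", "<user>"),
    "password": os.environ.get("SNOWFLAKE_PASSWORD", "<password>"),
    "account": os.environ.get("SNOWFLAKE_ACCOUNT", "<account>"),
    "warehouse": os.environ.get("SNOWFLAKE_WAREHOUSE", "<warehouse>"),
    "database": os.environ.get("SNOWFLAKE_DATABASE", "<database>"),
    "schema": os.environ.get("SNOWFLAKE_SCHEMA", "<schema>"),
}
```

Reading the values from the environment keeps credentials out of notebooks and version control.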

Query Pushdown to Snowflake from Dask & RAPIDS

The process starts when the user sends a query from the Dask client to Snowflake for processing. Once Snowflake has processed the query, the client receives a response containing the metadata for all the SQL result sets.

It's important to note that a client can usually process only one chunk of the result set at a time. However, because Snowflake returns results in Apache Arrow format, the result sets can be handed to independent workers and processed in parallel.

Moving on, the metadata that the Dask client receives is used to initialize parallel reads of the result sets from Snowflake, which makes data retrieval fast. You can do all of this without leaving your Python environment, which is a significant highlight of this architecture and makes it very useful for data scientists.

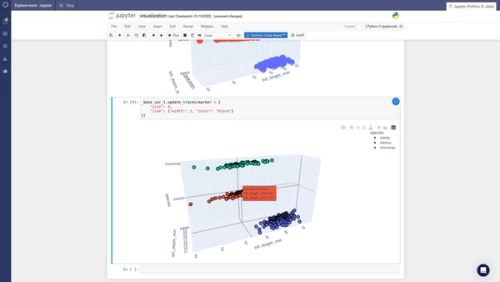

Finally, once the result sets are available as Dask dataframes, you can process them using RAPIDS while leveraging the speed that GPUs are known for. That completes the end-to-end machine learning workflow, be it training, validation, or feature engineering.

Some Benefits of Distributed On-Demand Computing

Shifting to distributed, on-demand cloud computing is not only hassle-free but also relatively cost-effective for a business. While on-premise infrastructure might come out somewhat cheaper for a large-scale company, it demands a large upfront capital investment, not to mention the time it takes to set up.

However, that’s not all. There are many other aspects of distributed cloud computing that you might be unaware of. Let’s go through them:

1. Unlimited Storage

As much as you would like to believe otherwise, on-site computing will always run into storage issues in the long run. Even if you're not a data-hungry organization, which is seldom the case nowadays, there will come a time when you're out of space. When that happens, adding extra storage is far harder than in the cloud, where you get virtually unlimited storage.

2. Quick Deployments

Cloud service providers work day and night to make your deployments seamless. Even though you have specialists for on-site computing as well, you couldn’t possibly compete with the likes of cloud giants such as Amazon and Microsoft, right?

So, once you sign up for cloud computing, deployments become as simple as a click. This saves a lot of human resources and adds to the cost-effectiveness of cloud systems.

3. Enhanced Security & Governance

Data governance and security always remain a big issue when you have on-site computing. Cloud vendors such as Amazon and Microsoft put in extra effort to make sure you don't face any problems regarding that.

However, just to put it out there, even the most prominent cloud computing vendors have suffered security breaches in the past. In my view, though, such breaches are still far less common than with on-site systems.

