TensorFlow

What is TensorFlow?

TensorFlow is an open-source framework for machine learning. It has a comprehensive ecosystem of tools, libraries, and community resources that lets developers build and deploy ML-powered applications and lets researchers innovate in ML. It can be used across a range of tasks, but it is particularly focused on training and inference of deep neural networks.

TensorFlow was originally developed by researchers and engineers on the Google Brain team to conduct machine learning (ML) and deep neural network research. The system is general enough to be applicable in a wide variety of other domains. The name “TensorFlow” derives from the operations that neural networks perform on multidimensional data arrays, also known as tensors. It was released under the Apache License 2.0 in 2015.

The single biggest benefit TensorFlow provides for machine learning development is abstraction. Instead of dealing with the nitty-gritty details of implementing algorithms, or figuring out ways to hitch the output of one function to the input of another, the data scientist can focus on the overall logic of the application. TensorFlow takes care of the details behind the scenes.
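As a rough illustration of this abstraction, the sketch below uses the Keras API bundled with TensorFlow to chain two layers and train them without writing any of the underlying math by hand. The layer sizes, the random data, and the target function are arbitrary assumptions chosen only to keep the example small.

```python
import numpy as np
import tensorflow as tf

# Stack layers; the framework wires each layer's output to the next layer's input.
model = tf.keras.Sequential([
    tf.keras.Input(shape=(4,)),
    tf.keras.layers.Dense(8, activation="relu"),
    tf.keras.layers.Dense(1),
])

# Optimizer and loss are selected by name; no gradient code is written by hand.
model.compile(optimizer="adam", loss="mse")

# Synthetic data (an assumption for illustration): learn the sum of four features.
features = np.random.rand(32, 4).astype("float32")
targets = features.sum(axis=1, keepdims=True)

history = model.fit(features, targets, epochs=2, verbose=0)
predictions = model.predict(features, verbose=0)
print(predictions.shape)
```

Two epochs are far too few to fit anything useful; the point is only that the training loop, differentiation, and weight updates are handled by the library.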

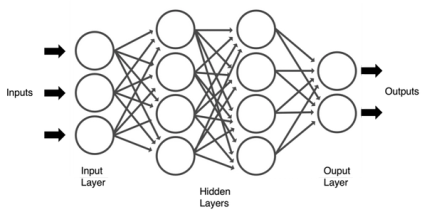

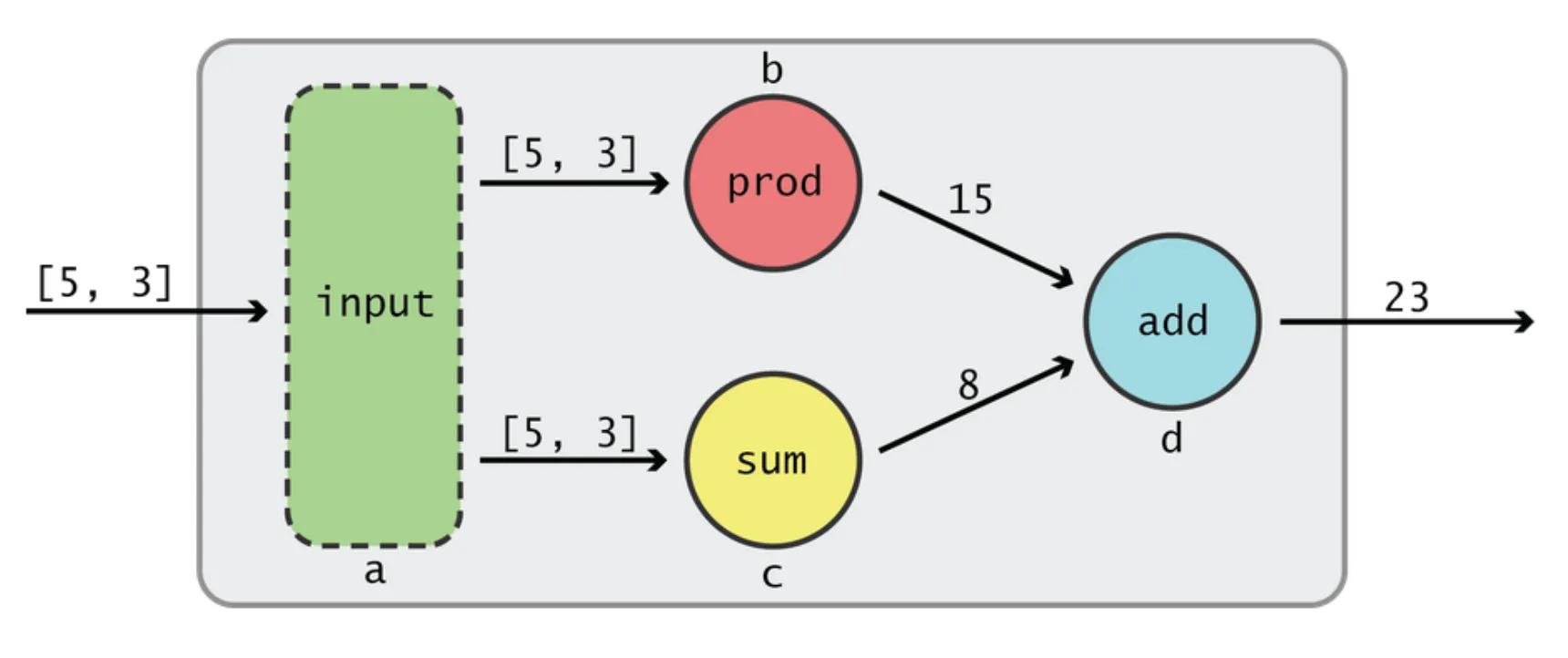

TensorFlow allows data scientists to create dataflow graphs: structures that describe how data moves through a series of processing nodes. Each node in the graph represents a mathematical operation, and each connection or edge between nodes is a tensor, that is, a multidimensional data array.

Abstraction of TensorFlow dataflow graphs

Source: Towards Data Science
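The node-and-edge structure described above can be inspected from Python. The following is a minimal sketch, assuming TensorFlow 2.x, in which `tf.function` traces an ordinary Python function into a graph; the function name `affine` and the tensor shapes are hypothetical choices for illustration.

```python
import tensorflow as tf

@tf.function
def affine(x, w, b):
    # Each TensorFlow call here becomes a node (operation) in the traced graph.
    return tf.matmul(x, w) + b

# Trace the function for specific input signatures to obtain a concrete graph.
concrete = affine.get_concrete_function(
    tf.TensorSpec(shape=[None, 3], dtype=tf.float32),
    tf.TensorSpec(shape=[3, 2], dtype=tf.float32),
    tf.TensorSpec(shape=[2], dtype=tf.float32),
)

# Nodes are operations; the tensors passed between them are the edges.
op_types = [op.type for op in concrete.graph.get_operations()]
print(op_types)

# The traced function is still called like normal Python code.
result = affine(tf.ones([1, 3]), tf.ones([3, 2]), tf.zeros([2]))
print(result.numpy())
```

The printed list includes placeholder nodes for the inputs alongside the matrix multiplication and addition, which mirrors the picture of operations connected by tensors.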

TensorFlow provides all of this for the data scientist by way of the Python language (C++ is also supported). Nodes and tensors in TensorFlow are Python objects, and TensorFlow applications are themselves Python applications. The actual math operations, however, are written as high-performance C++ binaries. Python just directs traffic between the pieces, and provides high-level programming abstractions to hook them together.
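A small sketch of this division of labor, assuming TensorFlow 2.x: the tensors below are ordinary Python objects, while the matrix multiplication itself is carried out by TensorFlow's compiled backend. The values are arbitrary.

```python
import tensorflow as tf

# Tensors are Python objects that carry a shape and a dtype.
a = tf.constant([[1.0, 2.0], [3.0, 4.0]])
identity = tf.constant([[1.0, 0.0], [0.0, 1.0]])

# Python only issues the call; the arithmetic runs in compiled code.
product = tf.matmul(a, identity)

print(isinstance(product, tf.Tensor))
print(product.shape, product.dtype)
print(product.numpy())
```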

TensorFlow applications can run on almost any convenient target: a local machine, a cluster in the cloud, iOS and Android devices, CPUs, or GPUs. Google also offers Tensor Processing Unit (TPU) hardware on Google Cloud Platform for further acceleration. The models that TensorFlow produces, though, can be deployed on almost any device where they will be used to serve predictions.
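To make the choice of target concrete, here is a minimal sketch, assuming TensorFlow 2.x, that lists the devices visible to the runtime and places a computation on a GPU when one is present, falling back to the CPU otherwise. The matrix size is an arbitrary assumption.

```python
import tensorflow as tf

# List every device TensorFlow can see on this machine.
print(tf.config.list_physical_devices())

# Prefer the first GPU if one is available; otherwise use the CPU.
gpus = tf.config.list_physical_devices("GPU")
device = "/GPU:0" if gpus else "/CPU:0"

with tf.device(device):
    x = tf.random.uniform([4, 4])
    y = tf.matmul(x, x)

# The resulting tensor records the device it was computed on.
print(y.device)
```

The same code runs unchanged on either kind of hardware; only the device string differs.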

As TensorFlow’s share of research papers declined to the advantage of PyTorch, the TensorFlow team released a new major version of the library in September 2019. TensorFlow 2.0 introduced many changes, the most significant being eager execution, which changed the automatic differentiation scheme from a static computational graph to the “Define-by-Run” scheme originally made popular by PyTorch. Other major changes included the removal of old libraries, cross-compatibility between models trained on different versions of TensorFlow, and significant GPU performance improvements. In addition, support for TensorFlow Lite makes it possible to deploy models on a greater variety of platforms.
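The define-by-run style can be sketched in a few lines, assuming TensorFlow 2.x with its default eager mode. Operations execute immediately, and `tf.GradientTape` records them so that gradients can be computed afterwards. The function y = x² + 2x and the starting value are arbitrary choices for illustration.

```python
import tensorflow as tf

# Eager execution is the default in TensorFlow 2.x.
print(tf.executing_eagerly())

x = tf.Variable(3.0)

# The tape records operations as they run, with no separate graph-building step.
with tf.GradientTape() as tape:
    y = x * x + 2.0 * x

# dy/dx = 2x + 2, evaluated at x = 3.
grad = tape.gradient(y, x)
print(float(grad))
```

Because the computation runs line by line, intermediate values such as `y` can be printed or inspected with ordinary Python debugging tools.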